How Does Tagger (TAG) Enable Data Annotation? An Analysis of Decentralized Data Annotation and Validation Mechanisms

In today’s AI industry, data annotation often accounts for a major share of development costs. Yet traditional centralized platforms face problems such as data silos, low efficiency, and opaque revenue distribution. Tagger aims to address these pain points through a decentralized architecture, making data production more open, efficient, and verifiable.

From the perspective of blockchain and digital assets, Tagger’s core value lies in transforming “data” into an asset that can be owned, verified, and traded, while using token incentives to drive global collaborative production. This means data is not only a resource for AI training, but also an important part of the Web3 economy.

Overview of Tagger’s (TAG) Data Annotation Mechanism

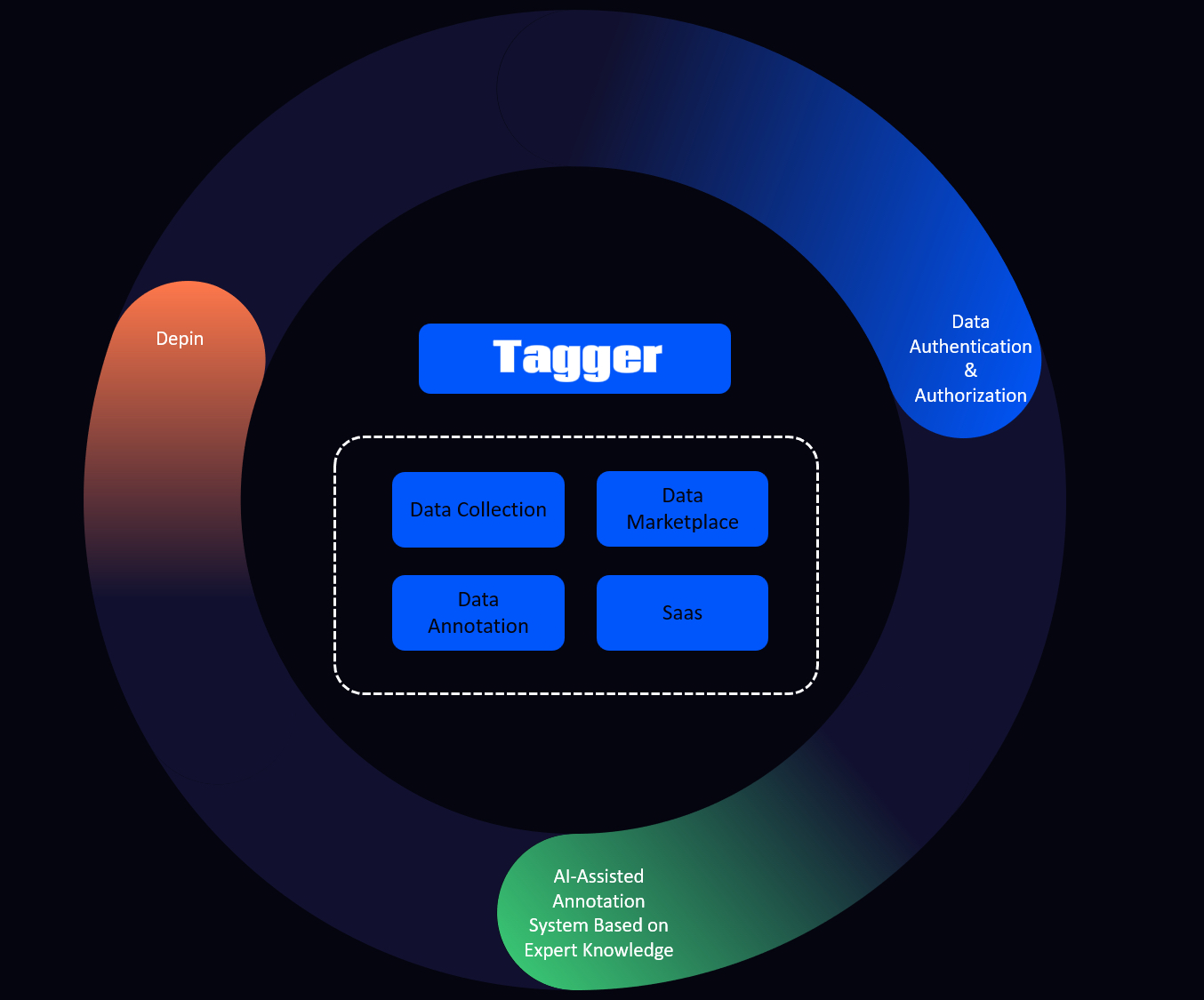

Tagger’s data annotation mechanism can be understood as a “decentralized data production system.” Its core goal is to convert raw data into structured data assets that can be used to train AI models. The entire system is built around four stages: data collection, annotation, validation, and delivery, forming a complete data processing workflow.

In its mechanism design, Tagger splits data production into separate modules, such as collection, annotation, and validation. Each module is handled by different participants, so no single institution controls the entire process. This distributed structure is meant to improve efficiency and to make the system more resilient, since the network does not depend on any one operator.
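The four-stage workflow, with each stage handled by a different participant, can be pictured as a small state machine. The sketch below is a minimal illustration in Python, not Tagger’s implementation: the `Stage` and `DataItem` names, and the rule that one participant may not handle two stages of the same item, are assumptions made for this example.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Stage(IntEnum):
    # Hypothetical stage names mirroring the four stages described in the text.
    COLLECTION = 0
    ANNOTATION = 1
    VALIDATION = 2
    DELIVERY = 3  # terminal state: the item is ready to hand to the requester


@dataclass
class DataItem:
    item_id: str
    stage: Stage = Stage.COLLECTION
    # Maps each completed stage to the participant who handled it.
    handled_by: dict = field(default_factory=dict)

    def advance(self, participant: str) -> Stage:
        """Record who finished the current stage and move the item to the next one."""
        if self.stage == Stage.DELIVERY:
            raise ValueError(f"{self.item_id} is already ready for delivery")
        # Assumed rule: no participant may handle more than one stage of the same item.
        if participant in self.handled_by.values():
            raise PermissionError(f"{participant} already handled a stage of {self.item_id}")
        self.handled_by[self.stage] = participant
        self.stage = Stage(self.stage + 1)
        return self.stage
```

For example, `DataItem("img-001")` advanced by `"collector-A"`, `"annotator-B"`, and `"validator-C"` moves through annotation and validation to delivery, while a second call by `"annotator-B"` is rejected.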

At the same time, Tagger brings AI-assisted tools such as AI Copilot into the annotation process, so that non-specialist users can also complete complex tasks. This human-machine collaboration significantly lowers the barrier to professional data annotation, which helps the network attract more participants and rapidly expand its data supply.

Overall, Tagger’s annotation mechanism is not simple crowdsourcing. It is an integrated system that combines blockchain-based ownership verification, AI assistance, and incentive mechanisms, providing a new infrastructure for AI data production.

Source: tagger.pro

How Tagger (TAG) Distributes Data Tasks: Crowdsourced Annotation and Task Allocation Mechanisms

In the Tagger network, data task distribution is the core link between demand and supply. Data requesters, such as AI developers or enterprises, can publish annotation tasks on the platform and set rules, budgets, and quality requirements. The system then breaks these tasks into multiple subtasks and assigns them to different participants for execution.
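How a published task might be split into subtasks can be sketched as follows. This is an illustrative example only: the batch size, the rule of sharing the budget in proportion to item count, and the use of integer token units are assumptions, not documented Tagger behavior.

```python
from dataclasses import dataclass


@dataclass
class Subtask:
    task_id: str
    index: int
    items: list
    reward: int  # reward in the smallest token unit (assumed integer accounting)


def split_task(task_id: str, items: list, budget: int, batch_size: int) -> list:
    """Split a requester's task into fixed-size batches and share the budget by item count."""
    if batch_size <= 0 or not items:
        raise ValueError("need a positive batch_size and at least one item")
    batches = [items[i:i + batch_size] for i in range(0, len(items), batch_size)]
    per_item, remainder = divmod(budget, len(items))
    subtasks = [Subtask(task_id, idx, batch, per_item * len(batch))
                for idx, batch in enumerate(batches)]
    # Give any leftover units to the first subtask so the full budget is allocated.
    subtasks[0].reward += remainder
    return subtasks
```

For example, ten items with a budget of 1,003 units and batches of four produce three subtasks paid 403, 400, and 200 units.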

The task distribution process usually incorporates intelligent matching mechanisms. The platform assigns tasks to the most suitable nodes based on task type, data category, and participant capability. For example, image annotation tasks are prioritized for annotators with relevant experience, improving overall efficiency and accuracy.
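A simple version of capability-based matching ranks annotators by their experience with the task type. The scoring rule below (experience first, historical accuracy as a tie-breaker) and the `Annotator` fields are hypothetical; the text does not specify how Tagger’s matching actually weighs these factors.

```python
from dataclasses import dataclass, field


@dataclass
class Annotator:
    name: str
    accuracy: float  # historical acceptance rate, 0.0-1.0 (assumed metric)
    experience: dict = field(default_factory=dict)  # task type -> completed tasks


def match(task_type: str, annotators: list, k: int) -> list:
    """Return the names of the k annotators best suited to task_type.

    Hypothetical rule: rank by experience with this task type, then by accuracy.
    Annotators with no experience in the type remain eligible but rank last.
    """
    ranked = sorted(
        annotators,
        key=lambda a: (a.experience.get(task_type, 0), a.accuracy),
        reverse=True,
    )
    return [a.name for a in ranked[:k]]
```

With this rule, an image task goes first to annotators with the most completed image tasks, even if someone with a higher overall accuracy specializes in audio.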

In addition, Tagger scales through a crowdsourcing model. Unlike traditional outsourced teams, a decentralized network can mobilize users around the world to participate in tasks at the same time, greatly accelerating data processing. This model is especially well suited to AI projects that require large-scale data handling.

During distribution, smart contracts can also automatically manage task execution and payment. Once a task is completed and validated, the system can automatically release rewards, reducing manual intervention and improving overall efficiency.
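The escrow-and-release pattern described above can be simulated in a few lines. This Python class only models the bookkeeping such a contract might perform; it is not Tagger’s contract, and refunding the requester when validation fails is an assumed policy.

```python
class TaskEscrow:
    """Simulates escrow logic a task contract might implement (hypothetical)."""

    def __init__(self, requester: str, deposit: int):
        self.requester = requester
        self.balances = {requester: 0}  # funds paid out of escrow, per account
        self.available = deposit        # deposited funds not yet locked to a subtask
        self.locked = {}                # subtask id -> (worker, amount)

    def assign(self, subtask_id: str, worker: str, amount: int) -> None:
        """Lock part of the deposit as the reward for one subtask."""
        if amount > self.available:
            raise ValueError("insufficient escrowed funds")
        if subtask_id in self.locked:
            raise ValueError("subtask already assigned")
        self.available -= amount
        self.locked[subtask_id] = (worker, amount)

    def settle(self, subtask_id: str, validated: bool) -> None:
        """Release the reward if validation passed; otherwise refund the requester."""
        worker, amount = self.locked.pop(subtask_id)
        recipient = worker if validated else self.requester
        self.balances[recipient] = self.balances.get(recipient, 0) + amount
```

Because the reward is locked at assignment time, a worker whose result passes validation is paid without any further action from the requester.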

How Tagger (TAG) Validates Annotation Results: Data Validation and Quality Control Mechanisms

Data quality is critical to AI training performance, so Tagger introduces a multi-layer validation mechanism after annotation is completed to ensure data accuracy and consistency. The validation process usually does not rely on a single node, but is completed through multi-party collaboration.

First, the system uses multi-annotator consistency validation. The same data is independently annotated by multiple participants, and the result is accepted only when their outputs are consistent or close to one another. This mechanism can effectively reduce the impact of individual errors.
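For categorical labels, a consistency check of this kind can be as simple as an agreement threshold over independent labels. The sketch below assumes at least three annotators and a two-thirds agreement threshold, both illustrative values; it covers exact label agreement only, whereas outputs that must merely be "close" (such as bounding boxes) would need an overlap measure instead.

```python
from collections import Counter
from typing import Optional


def consensus_label(labels: list, min_annotators: int = 3,
                    min_agreement: float = 2 / 3) -> Optional[str]:
    """Return the consensus label, or None if the item should be re-queued.

    Assumed thresholds: at least `min_annotators` independent labels, and the most
    common label must account for at least `min_agreement` of them.
    """
    if len(labels) < min_annotators:
        return None
    label, count = Counter(labels).most_common(1)[0]
    return label if count / len(labels) >= min_agreement else None
```

An item labeled `["cat", "cat", "dog"]` is accepted as `"cat"`, while `["cat", "dog", "bird"]` returns `None` and would go back for more annotations.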

Second, Tagger introduces AI-assisted detection tools to automatically check annotation results. For example, models can judge whether annotations are logically consistent or contain obvious errors, improving the efficiency of overall quality control.
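The text describes model-based checks; as a simpler stand-in, the sketch below applies rule-based sanity checks to an image bounding-box annotation. The `BoxAnnotation` type and the specific rules are assumptions for illustration, and a learned model would catch subtler errors than these.

```python
from dataclasses import dataclass


@dataclass
class BoxAnnotation:
    label: str
    x: float  # left edge in pixels
    y: float  # top edge in pixels
    w: float  # width in pixels
    h: float  # height in pixels


def find_problems(box: BoxAnnotation, img_w: int, img_h: int, allowed_labels: set) -> list:
    """Return human-readable problems; an empty list means the box passed these checks."""
    problems = []
    if box.label not in allowed_labels:
        problems.append(f"unknown label {box.label!r}")
    if box.w <= 0 or box.h <= 0:
        problems.append("non-positive width or height")
    if box.x < 0 or box.y < 0 or box.x + box.w > img_w or box.y + box.h > img_h:
        problems.append("box extends outside the image")
    return problems
```

Annotations that return a non-empty list can be flagged for human review before they reach consensus or reward settlement.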

In addition, validation of some high-value data may also involve reputation or staking mechanisms. Annotation results from high-reputation nodes carry greater weight, while low-quality work may lead to penalties. This design uses economic incentives to encourage participants to maintain high-quality output.
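One way to combine reputation weighting with staking penalties is sketched below. Everything here is assumed for illustration: reputation scores act as vote weights, and annotators whose label loses the weighted vote forfeit a fixed share of their stake (10%, expressed in basis points). The text does not specify Tagger’s actual weights or penalty rates.

```python
from collections import defaultdict


def weighted_vote(votes: dict, reputation: dict) -> str:
    """votes: annotator -> label; reputation: annotator -> weight (assumed > 0)."""
    totals = defaultdict(float)
    for annotator, label in votes.items():
        totals[label] += reputation[annotator]
    return max(totals, key=totals.get)


def slash_dissenters(votes: dict, stakes: dict, accepted: str, rate_bps: int = 1000) -> dict:
    """Return new stakes with rate_bps / 10,000 of each dissenting voter's stake removed.

    Only annotators who voted for a label other than `accepted` are penalized;
    stakes are integer token units, so the penalty is rounded down.
    """
    return {
        a: stake - stake * rate_bps // 10_000 if a in votes and votes[a] != accepted else stake
        for a, stake in stakes.items()
    }
```

In this sketch a single high-reputation annotator can outvote two low-reputation ones, which is the intended effect of weighting, but it also means reputation scores must be earned carefully.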

How Tagger (TAG) Uses Annotated Data: AI Model Training and Data Applications

After annotation and validation are completed, the data enters the actual application stage, where it is mainly used for AI model training and optimization. High-quality annotated data can significantly improve model accuracy and generalization, so this stage determines the final value of the entire system.

In machine learning workflows, annotated data is usually used to train supervised learning models. For example, image classification models need large amounts of labeled data to learn features, while speech recognition systems rely on accurate transcription data. The data provided by Tagger can be used directly in these scenarios.

Beyond model training, the data can also be used for model evaluation and optimization. For example, testing a model on annotated data it was not trained on measures its performance and guides further parameter tuning. This makes Tagger’s data not only a training resource, but also an important part of the AI lifecycle.
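Evaluation on annotated data usually comes down to comparing model predictions against the annotated ("gold") labels. The helper below is a generic sketch of that comparison, computing overall accuracy and per-class recall; it is not tied to any Tagger API.

```python
from collections import Counter


def evaluate(predictions: list, gold_labels: list) -> dict:
    """Compare model predictions with annotated gold labels on a held-out set."""
    if len(predictions) != len(gold_labels) or not gold_labels:
        raise ValueError("need equal-length, non-empty lists")
    pairs = list(zip(predictions, gold_labels))
    correct = sum(p == g for p, g in pairs)
    per_class_total = Counter(gold_labels)
    per_class_hit = Counter(g for p, g in pairs if p == g)
    return {
        "accuracy": correct / len(gold_labels),
        # Share of each annotated class the model recovered.
        "recall": {c: per_class_hit[c] / n for c, n in per_class_total.items()},
    }
```

Per-class recall shows which categories the model struggles with, which in turn indicates where additional annotated data would be most useful.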

In addition, Tagger supports data trading and licensing, allowing data to circulate across different applications. This structure transforms data from a one-time resource into a reusable asset, further increasing its economic value.

Performance and Efficiency Analysis of Tagger’s (TAG) Annotation Mechanism

From a performance perspective, Tagger’s core advantage lies in its scalability. Through a decentralized network, the system can dynamically increase the number of participants based on demand, allowing it to handle data processing tasks of different sizes. This elastic scalability makes it suitable for large-scale AI projects.

The introduction of AI-assisted tools is also an important factor in improving efficiency. Through pre-annotation and automated detection, the system can reduce manual workload and allow annotators to focus on key judgments, thereby increasing overall production efficiency.
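Pre-annotation typically pairs a model suggestion with a confidence score, and the confidence decides how much human effort an item needs. In the sketch below, the routing rule and the 0.9 threshold are assumed tuning choices, not documented Tagger parameters.

```python
def route_pre_annotations(pre_labels: dict, threshold: float = 0.9) -> tuple:
    """Split model pre-annotations into items a human only confirms and items a human labels.

    pre_labels: item id -> (suggested label, model confidence in [0, 1]).
    """
    confirm, relabel = {}, []
    for item_id, (label, confidence) in pre_labels.items():
        if confidence >= threshold:
            confirm[item_id] = label  # annotator quickly accepts or corrects the suggestion
        else:
            relabel.append(item_id)   # annotator labels the item from scratch
    return confirm, relabel
```

Raising the threshold sends more items to full human labeling, trading speed for caution; lowering it saves effort but relies more heavily on the model.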

However, the decentralized structure can also introduce certain delays. For example, multi-party validation improves quality, but it also adds processing time. As a result, the system needs to strike a balance between efficiency and accuracy.
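The efficiency-accuracy trade-off can be made concrete with a simple model. Assume a binary labeling task, an odd number n of annotators, and that each annotator is independently correct with probability p. The majority is then correct with probability equal to the sum, over k from (n + 1) / 2 to n, of C(n, k) p^k (1 - p)^(n - k). These independence assumptions are a simplification, and real annotator errors are often correlated.

```python
from math import comb


def majority_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent annotators is correct.

    Assumes a binary task, an odd n, and per-annotator accuracy p.
    """
    if n % 2 == 0:
        raise ValueError("use an odd number of annotators to avoid ties")
    # Sum the binomial probabilities of k correct annotators for every majority k.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))
```

With p = 0.8, using one, three, and five annotators gives roughly 0.8, 0.896, and 0.942, while the labeling work per item grows threefold and fivefold. Each extra pair of annotators buys a smaller accuracy gain at the same added cost.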

Overall, Tagger’s performance depends on its task allocation algorithms, validation mechanisms, and participant scale. As the network grows, its efficiency is expected to improve further.

Advantages and Potential Limitations of Tagger’s (TAG) Data Annotation Mechanism

Tagger’s main advantages lie in its openness and incentive mechanism, which allow users around the world to participate in data production and quickly expand data supply. At the same time, blockchain-based data ownership verification and traceability also strengthen data credibility.
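Ownership verification and traceability generally rest on tamper-evident fingerprints of the data. The sketch below hash-chains annotation records with SHA-256 so that altering any earlier record changes every later hash. Which records Tagger actually hashes, and what it anchors on-chain, are not described in the text; the record fields here are hypothetical.

```python
import hashlib
import json


def record_hash(record: dict, prev_hash: str) -> str:
    """SHA-256 over a canonical JSON encoding of the record plus the previous hash."""
    payload = json.dumps({"prev": prev_hash, "record": record},
                         sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records: list) -> list:
    """Link records in order, starting from an all-zero genesis hash."""
    hashes, prev = [], "0" * 64
    for rec in records:
        prev = record_hash(rec, prev)
        hashes.append(prev)
    return hashes


def verify_chain(records: list, hashes: list) -> bool:
    """Recompute the chain and compare it with previously published hashes."""
    return build_chain(records) == hashes
```

Publishing only the latest hash would be enough to let anyone later check that the full history of an item’s annotations has not been altered.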

In addition, AI-assisted annotation tools lower the professional barrier, allowing non-specialist users to participate in high-quality data production. This is important for addressing the shortage of specialized data.

However, this model also faces certain challenges. For example, uneven participant skill levels may affect data consistency, and quality control is more complex in a decentralized environment. In addition, task coordination and management costs are higher than in centralized systems.

One common misconception is that Tagger is merely a “crowdsourcing platform.” In reality, it is closer to a complete data economy system, covering data production, validation, circulation, ownership verification, and other stages. Its complexity and potential go far beyond the traditional model.

Conclusion

Tagger (TAG) combines blockchain, AI, and crowdsourcing mechanisms to build a decentralized data annotation and validation network. Its core innovation lies in breaking down the data production process and distributing it among global participants, while using validation mechanisms and incentive systems to ensure data quality.

This mechanism not only improves data production efficiency, but also provides a sustainable way to supply data for AI development. As data becomes a core resource for AI, decentralized data infrastructure of the kind Tagger represents is becoming an important direction in the convergence of Web3 and AI.

FAQ

How does Tagger (TAG) ensure data annotation quality?

Tagger safeguards data accuracy through multi-annotator consistency validation, AI-assisted detection, and reputation and staking mechanisms.

How is Tagger’s data annotation different from traditional platforms?

Tagger uses a decentralized crowdsourcing model combined with blockchain-based ownership verification and incentive mechanisms, while traditional platforms are controlled by centralized institutions.

What role does TAG play in the data annotation process?

TAG is used to pay task fees and incentivize participants. It is the core driving force of the entire data production network.

What scenarios is Tagger’s data mainly used for?

It is mainly used in AI model training, data analysis, data trading, and related application scenarios.

Is Tagger suitable for large-scale data processing?

Yes. Its decentralized structure can dynamically expand the number of participants, making it suitable for large-scale data processing tasks.
