What is Claude? Costs, features, Claude Code, Cowork, and a complete comparison with ChatGPT: the most detailed Anthropic guide for 2026.

This article comprehensively dissects Claude’s features, pricing plans, model selection, Claude Code, Cowork, and the MCP ecosystem, from tool usage to the story behind Anthropic, written for anyone wanting to understand Claude.

(Background: The judge supports Anthropic, prohibiting the Department of Defense from penalizing Claude with a “supply chain risk label.”)

(Background supplement: Anthropic AI Economic Index thousands of words report: Automated trading workflow frequency doubled, Claude is transitioning from a tool to a life assistant.)

Table of contents

- Anthropic Valuation

- From Claude 1 to Opus 4.6: An Evolutionary History of AI

- Is the free version sufficient? A complete breakdown of Claude’s pricing

- Consumer plans

- Enterprise plans

- API billing

- More than just chatting: A complete tutorial on Claude’s core features

- Feature 1: Projects

- Feature 2: Artifacts

- Feature 3: Memory

- Feature 4: Extended Thinking

- Claude Code: The terminal tool that engineers are addicted to

- Claude Cowork: An AI agent for non-coders

- MCP: The USB-C of the AI world

- Developer building blocks: Claude API

- Claude vs ChatGPT vs Gemini: Who to choose

- Who is Anthropic: A $380 billion empire built by defectors

- Constitutional AI: Giving AI a constitution

- Financing and numbers

- Safety is not a restriction, it’s a moat

What is Claude, and why is everyone suddenly talking about it as though it is overtaking ChatGPT? You might have seen people on social media using it to code, conduct research, or produce reports, but you remain unsure about what sets it apart from ChatGPT and whether you should spend money on a subscription. This article aims to address these questions. (You can jump to the section that matches your needs.)

First, here’s the simplest definition: Claude is an AI assistant developed by Anthropic, available in four usage modes: web version, desktop app, terminal tool, and API. It can hold conversations, write articles, code, analyze documents, search the web, process images, and execute multi-step tasks directly on your computer.

Anthropic Valuation

As of March 2026, Claude’s parent company Anthropic is valued at $380 billion, with annual revenue exceeding $14 billion. Four major tech companies (Amazon, Google, Microsoft, and Nvidia) are investors. Claude Code (the developer terminal tool) alone generates annual revenue of $2.5 billion, with commercial subscriptions growing fourfold since the beginning of the year.

This article will approach from the most practical perspective: what it can do, how much it costs, how to choose a plan, and which derived products are suitable for whom, before returning to Anthropic’s story to explain why Claude has taken a different path from other AI tools.

From Claude 1 to Opus 4.6: An Evolutionary History of AI

To understand Claude’s product line, you need to first grasp Anthropic’s model naming logic.

In March 2024, Anthropic established a three-tier naming system when releasing Claude 3: Opus (the most powerful), Sonnet (balanced), Haiku (the fastest). The three names borrow from three forms of creative work, ordered from complex to simple: the opus (a large-scale work), the sonnet, and the haiku.

The underlying logic of this naming system is: different tasks require different levels of intelligence. You wouldn’t ask a PhD student to check the weather, nor would you ask an intern to write a legal contract. Opus is the PhD, Sonnet is the manager, and Haiku is the assistant.

Here is the complete evolution timeline of Claude:

| Time | Model | Key Breakthrough |
|---|---|---|
| March 2023 | Claude 1 | First version, invite-only |
| July 2023 | Claude 2 | First public release, 100K context window |
| November 2023 | Claude 2.1 | Context window doubled to 200K tokens (about 500 pages) |
| March 2024 | Claude 3 (Opus / Sonnet / Haiku) | Three-tier naming established, added image understanding capability |
| June 2024 | Claude 3.5 Sonnet | Performance surpasses the previous generation’s strongest Opus 3 |
| October 2024 | Claude 3.5 Sonnet v2 + Haiku | “Computer usage” functionality publicly tested |
| February 2025 | Claude 3.7 Sonnet | Introduced “Extended Thinking,” allowing the model to reason before answering |
| May 2025 | Claude Sonnet 4 + Opus 4 | Significant improvement in coding capabilities |
| October 2025 | Haiku 4.5 | Fastest model upgrade |
| November 2025 | Opus 4.5 | Optimization of coding and work tasks |
| February 2026 | Opus 4.6 | 1 million token context (beta), Agent Teams |
| February 2026 | Sonnet 4.6 | First to surpass the previous generation Opus in code evaluation |

Two milestones are worth noting.

First, the 1 million token context window. Opus 4.6 can handle about 1 million tokens of content at once, equivalent to reading 10-15 books in one go. This means you can provide an entire codebase, a complete legal contract, or a year’s worth of financial reports for Claude to analyze at once.

Second, Agent Teams. Opus 4.6 introduces the ability for multiple Claude agents to collaborate simultaneously. In plain terms, while previously you could only chat with one Claude, now you can have a group of Claudes working separately: one conducting research, one writing, one proofreading, and then summarizing the results for you.

These two breakthroughs signify that Claude is evolving from a “chat tool” into a “work system.”

Is the free version sufficient? A complete breakdown of Claude’s pricing

Claude’s pricing structure is divided into consumer plans and enterprise plans, plus usage-based API billing. Here is the latest complete comparison as of March 2026.

Consumer Plans

| Plan | Monthly Fee | Available Models | Core Features |
|---|---|---|---|
| Free | $0 | Sonnet 4.5 | Basic conversation, image analysis, web search, Projects, Artifacts, Memory |
| Pro | $20/month (annual payment $17/month) | Opus 4.6 + Sonnet 4.6 + Haiku 4.5 | Claude Code, Cowork, Research, all features unlocked (includes API usage quota) |
| Max 5x | $100/month | Same as Pro | 5 times the Pro API usage quota, priority access |
| Max 20x | $200/month | Same as Pro | 20 times the Pro API usage quota, highest priority access |

Claude Code (terminal development tool) and Cowork (desktop agent) are included in Pro and higher plans. Developers who access Claude Code through the API instead are billed by token usage, independently of the web subscription.

What can the free version do? As of February 2026, Anthropic has opened up Memory, Projects, and Artifacts—features previously available only to paid users—to free users.

You can use the free version to write articles, analyze images, organize data, and perform web searches. The main limitations are a usage cap (dynamically adjusted based on conversation length and frequency) and model choice: you can only use Sonnet 4.5, not the most powerful Opus.

Is Pro worth it? For $20 a month (about NT$640), you unlock all models, Claude Code (terminal development tool), Cowork (desktop agent), and in-depth research features.

Who is Max suitable for? If you are a heavy user who spends several hours daily using Claude Code for coding or require substantial use of Opus 4.6 for complex analysis, the Pro quota may not be enough. Max 5x ($100/month) or Max 20x ($200/month) offers larger usage quotas and the highest priority.

Enterprise Plans

| Plan | Monthly Fee/Person | Features |
|---|---|---|
| Team Standard | $25 (annual payment) / $30 (monthly payment) | Team collaboration, SSO, management backend, 5 users minimum |
| Team Premium | $150/person/month | Includes Claude Code, early access to new features |
| Enterprise | Quoted based on demand | Granular permissions, SCIM, audit logs, custom data retention policies |

API Billing

| Model | Input Cost (per million tokens) | Output Cost (per million tokens) |
|---|---|---|
| Haiku 4.5 | $1 | $5 |
| Sonnet 4.6 | $3 | $15 |
| Opus 4.6 | $5 | $25 |

In simple terms, if you are a developer using the Sonnet 4.6 API for general tasks (input 1,000 tokens + output 500 tokens), the cost per interaction is about $0.01, under NT$1.
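The per-interaction estimate can be reproduced with a few lines of arithmetic. The sketch below is only an illustration: the prices are copied from the API billing table in this article, and the dictionary keys and the `request_cost` helper are hypothetical names, not official model IDs or part of any SDK.

```python
# Prices in USD per million tokens, copied from the API billing table above.
# The keys are illustrative labels, not official API model identifiers.
PRICES_PER_MILLION = {
    "haiku-4.5": (1.00, 5.00),
    "sonnet-4.6": (3.00, 15.00),
    "opus-4.6": (5.00, 25.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD of a single request."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# The example from the text: 1,000 input tokens and 500 output tokens on Sonnet 4.6.
cost = request_cost("sonnet-4.6", 1000, 500)
print(f"${cost:.4f}")  # $0.0105
```

Output tokens cost five times as much as input tokens on every model in the table, so long responses dominate the bill even when prompts are short.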

More than just chatting: A complete tutorial on Claude’s core features

Many people still perceive Claude as “just another ChatGPT.” However, the features Claude launched in 2025 and 2026 have already transformed it from a conversational tool into a work platform.

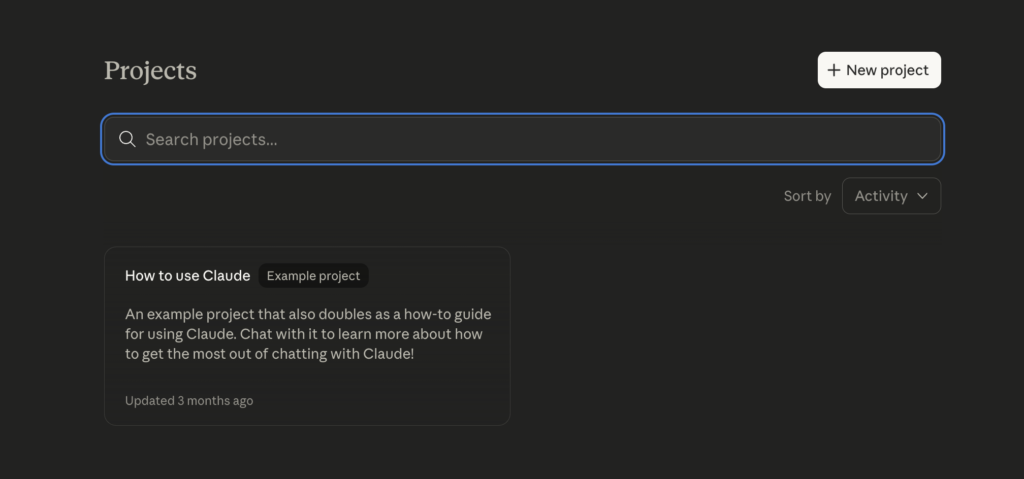

Feature 1: Projects

What is it? Projects allows you to create a dedicated workspace within Claude. You can upload documents (PDFs, code, spreadsheets), write background instructions, and initiate multiple conversations within this workspace. All conversations will automatically incorporate your preset documents and instructions.

Why is it important? Imagine you are an analyst researching fintech. You can create a “Fintech Research” project, upload 10 relevant industry reports and your analysis framework, and each time you open a new conversation, Claude already “knows” your background and needs. You don’t have to explain everything from scratch each time.

How to use it?

- Click on “New Project” in the left sidebar of Claude.

- Name the project (e.g., “Q1 Financial Report Analysis”).

- Upload relevant documents (knowledge base up to 200K tokens).

- Write Custom Instructions (e.g., “I am a financial analyst in Taiwan, please respond in Traditional Chinese, and cite data sources”).

- Open a conversation within the project, and all context is automatically loaded.

Feature 2: Artifacts

What is it? When you ask Claude to generate longer content—code, articles, charts, web pages—Claude will place the results in a separate panel next to the chat window. You can preview, edit, copy, or even download them directly.

Why is it important? Traditional AI chats clutter all outputs in a long string of conversations, making it hard to find a specific piece of code. Artifacts separate “outputs” from “conversations,” allowing you to focus on the results.

What can be generated?

- Complete HTML/CSS/JavaScript web pages (with real-time previews)

- React components

- SVG charts and infographics

- Mermaid flowcharts

- Python scripts

- Markdown long texts

- CSV spreadsheets

Practical tips: You can tell Claude, “Change this chart’s color scheme to dark mode” or “Add error handling to this code,” and it will update the Artifact directly in the panel instead of regenerating the entire document.

Feature 3: Memory

What is it? Claude automatically extracts important information from conversations: your name, profession, preferences, project background, and stores it for automatic application in future conversations.

Why is it important? This addresses a major pain point in AI chatting: starting over with every new conversation. With Memory, Claude behaves like a real assistant: it remembers who you are, what you do, and what style of responses you prefer.

As of February 2026, Memory has been made available to all users (including free users), which is a strategic decision by Anthropic to lower the barrier for transitioning from ChatGPT.

How to manage?

- Claude automatically remembers important information; you don’t need to operate manually.

- You can explicitly say, “Remember I am a software engineer in Taiwan, and I prefer using TypeScript.”

- You can view, edit, and delete Claude’s remembered content in the settings page.

- You can also say, “Forget everything you know about me” to clear the memory.

Feature 4: Extended Thinking

What is it? Extended Thinking allows Claude to undergo a “thinking” process before answering. You can see how Claude logically reasons through to the answer.

Why is it important? For problems requiring multi-step reasoning, such as math problems, logical reasoning, and debugging, Extended Thinking can significantly improve accuracy.

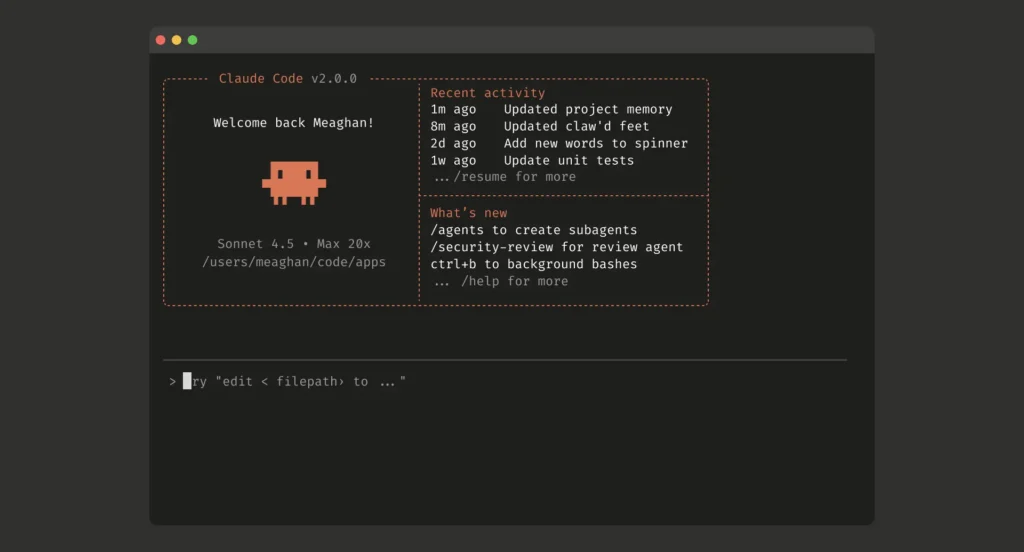

Claude Code: The terminal tool that engineers are addicted to

To be honest, for the average person, using Claude in the web chat interface is not very different from using its competitors. Claude Code, designed specifically for engineers, is where it has recently stood out.

What is Claude Code? It is an AI development tool that runs directly in your terminal. You don’t have to leave your code editor or command line to let Claude help you code, debug, refactor, write tests, or perform Git operations.

In simple terms, Claude Code is like having a senior engineer inside your terminal. You describe what you need in natural language, and it directly works within your codebase.

Running environments:

- CLI (Command Line): Enter `claude` in the terminal to start.

- VS Code Extension: Native integration in VS Code allows interaction within the editor.

- JetBrains Plugin: Native support for JetBrains IDEs like IntelliJ, WebStorm, etc.

- Desktop Application: A standalone desktop app.

- Web Version: Accessible at claude.ai/code.

Core capabilities:

- Multi-file editing: Claude Code can modify multiple files at once. For instance, if you say, “Change the error handling format for all API endpoints,” it will scan the entire project, identify all relevant files, and modify them one by one.

- Executing terminal commands: It can directly execute shell commands, run tests, install packages, and start development servers, eliminating the need to switch back and forth between Claude and the terminal.

- Git operations: Create branches, commit changes, push code, and create pull requests, all completed using natural language.

- Code understanding: You can ask it, “What does this function do?” “Why is this test failing?” “Does this piece of code have security vulnerabilities?” It will read the relevant files, analyze the context, and provide explanations.

- Agent Teams: A new capability introduced in Opus 4.6 that allows multiple Claude agents to work in parallel. For example, you can have one agent write front-end components while another writes back-end APIs, and a third writes tests.

Practical scenario examples:

You: "Migrate this Express.js project from JavaScript to TypeScript."

Claude Code: [Reads all .js files → Analyzes dependencies → Installs TypeScript → Creates tsconfig.json → Converts files one by one → Fixes type errors → Runs tests to confirm.]

You: "Find out why the CI pipeline is failing."

Claude Code: [Reads CI configuration → Views error logs → Analyzes failed tests → Identifies root cause → Proposes a fix.]

Cost: Claude Code is included in the Pro ($20/month) and higher plans. The free version does not support it. Team Premium ($150/person/month) also includes Claude Code.

If using the API, Claude Code’s cost is calculated based on API token usage.

Claude Code’s annual revenue has already reached $2.5 billion, with commercial subscriptions growing fourfold since early 2026. Many engineers report that once they start using Claude Code, they eventually upgrade to a Max plan.

Claude Cowork: An AI agent for non-coders

What is Cowork? It is the desktop AI agent system that Anthropic launched on January 12, 2026. It does not run in a browser but operates directly on your computer, allowing access to your local files, opening applications, browsing the web, filling out forms, and completing multi-step tasks from start to finish.

In simple terms, Cowork is like hiring a digital assistant to sit in front of your computer and do your work.

Core capabilities:

- Local file access: You designate a folder, and Cowork can read, edit, and organize all the files within it. No need for manual uploads and downloads.

- Application operations: Cowork can open apps on your computer, operate the browser, and fill out spreadsheets directly.

- Multi-step task decomposition: You describe a complex task, and Cowork will automatically break it down into smaller tasks, process them in parallel, and then summarize the results.

- Scheduling tasks: Enter `/schedule` in any Cowork task to set up automatic timed tasks.

- Dispatch: You can continuously converse with Claude from your phone or desktop, assigning tasks anytime.

- Plugins: Plugins can add specialized skills, connectors, and sub-agents to Cowork, customizing for different roles and teams.

Practical scenario examples:

- Research report: “Organize the 20 PDFs in this folder into a summary report.”

- File management: “Classify, rename, and organize the files in the downloads folder by type into corresponding subfolders.”

- Document generation: “Generate an Excel analysis report with charts based on these raw data.”

- Email processing: “Organize important emails from the past week in the inbox and summarize action items.”

Current limitations:

- Still in research preview; stability is continuously improving.

- Supported on both macOS and Windows (Windows launched on February 10, 2026).

- Requires Pro or higher plans.

Cowork represents a clear trend: AI is evolving from “you ask, I answer” to “you say, I do.” The chat interface is the first generation of AI interaction, while agents are the second generation.

MCP: The USB-C of the AI world

What is MCP? It is an open standard launched by Anthropic in November 2024 that allows AI applications to securely connect to external systems, databases, cloud services, development tools, and enterprise software.

Anthropic used a very precise metaphor: MCP is the USB-C of AI.

Prior to MCP, every AI tool needed to develop a separate integration to connect to an external service. You had to create one integration for Google Drive, another for Slack, and yet another for GitHub. If there are 10 AI tools and 10 external services, that’s 100 integrations.

MCP’s approach is to define a standard interface. External services only need to build one MCP Server, and any AI tool that supports MCP can connect. Ten AI tools and ten external services require only 20 integrations.
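The difference between the two counts is simply multiplication versus addition. A tiny sketch, with hypothetical function names chosen for this illustration:

```python
def point_to_point(ai_tools: int, services: int) -> int:
    """Every AI tool needs its own integration with every service."""
    return ai_tools * services


def with_shared_standard(ai_tools: int, services: int) -> int:
    """Each AI tool and each service implements the shared interface once."""
    return ai_tools + services


print(point_to_point(10, 10))        # 100
print(with_shared_standard(10, 10))  # 20
```

The gap widens as the ecosystem grows: with 50 tools and 50 services, the same functions give 2,500 versus 100.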

Three core components:

- Tools: Functions that AI can call (e.g., “Search Google Drive,” “Create GitHub Issue”).

- Resources: Data that AI can read (e.g., file contents, database query results).

- Prompts: Predefined interaction templates.
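Under the hood, MCP clients and servers exchange JSON-RPC 2.0 messages, and each of the three components has its own request methods. The sketch below only builds the request payloads with the standard library, to show their shape. The tool name, resource URI, and prompt name are hypothetical placeholders, and a real client would use an MCP SDK and transport rather than constructing messages by hand.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def jsonrpc_request(method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": method, "params": params}


# Tools: ask the server to run a function (hypothetical tool name and arguments).
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "create_github_issue", "arguments": {"title": "Fix login bug"}},
)

# Resources: ask the server for data to read (hypothetical URI).
read_resource = jsonrpc_request("resources/read", {"uri": "file:///reports/q1.md"})

# Prompts: fetch a predefined interaction template (hypothetical prompt name).
get_prompt = jsonrpc_request(
    "prompts/get",
    {"name": "summarize_report", "arguments": {"language": "en"}},
)

messages = [call_tool, read_resource, get_prompt]
for message in messages:
    print(json.dumps(message))
```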

Current ecosystem:

- Official connectors: Google Drive, Google Calendar, Gmail, Slack, GitHub, DocuSign, WordPress, FactSet, etc.

- Community connectors: PostgreSQL, Discord, Notion, MongoDB, Figma, and hundreds more.

- Supported AI tools: Claude, ChatGPT, VS Code, Cursor, JetBrains IDE, etc.

- Developers: Over 10,000 developers have adopted it within six months of release.

Developer building blocks: Claude API

If you are a developer looking to embed Claude’s capabilities into your applications, the API is your entry point.

Basic usage: Send requests through Anthropic’s Messages API, and Claude returns responses. Official SDKs are available for Python and TypeScript.

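```python
from anthropic import Anthropic

# The client reads the API key from the ANTHROPIC_API_KEY environment variable.
client = Anthropic()

message = client.messages.create(
    model="claude-sonnet-4-6-20260217",
    max_tokens=1024,  # upper bound on the length of the reply
    messages=[
        {"role": "user", "content": "Explain what DeFi is."}
    ],
)

# The reply is a list of content blocks; the first one holds the text.
print(message.content[0].text)
```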

Advanced features:

- Tool Use (function calls): You define a set of tools (functions), and Claude will automatically decide when to call which tool based on the conversation. For example, if you define a “check stock price” tool, when a user asks, “What is TSMC’s current price?” Claude will automatically call this tool to get real-time data and then form a response.

- Vision: The API supports image and PDF inputs. You can have Claude analyze screenshots, read documents, and understand charts.

- Streaming: Responses are delivered incrementally as they are generated, which improves the user experience.

- Prompt Caching: If your multiple requests share the same system prompts or background documents, caching can save up to 90% on input token costs.

- Batch API: Large volumes of requests can be processed in batch mode at half the cost, which suits offline tasks.
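To make the Tool Use item more concrete, here is a minimal sketch of the stock-price example. It shows a tool definition in the shape the Messages API accepts (`name`, `description`, `input_schema`) and a local dispatcher that turns a `tool_use` content block into a `tool_result` block. Everything else is an assumption for illustration: `get_stock_price`, the placeholder quote, and the sample block are made up, and no API call is made.

```python
def get_stock_price(ticker: str) -> str:
    """Hypothetical stub; a real tool would query a market data service."""
    placeholder_quotes = {"2330.TW": "PLACEHOLDER_PRICE"}  # not real market data
    return placeholder_quotes.get(ticker, "unknown ticker")


# Tool definition passed to the API via the `tools` parameter.
TOOLS = [
    {
        "name": "get_stock_price",
        "description": "Look up the latest price for a stock ticker, e.g. 2330.TW for TSMC.",
        "input_schema": {
            "type": "object",
            "properties": {
                "ticker": {"type": "string", "description": "Exchange ticker symbol"}
            },
            "required": ["ticker"],
        },
    }
]

# Maps tool names to the local functions that implement them.
HANDLERS = {"get_stock_price": get_stock_price}


def run_tool(block: dict) -> dict:
    """Execute a tool_use block and wrap the output as a tool_result block."""
    handler = HANDLERS[block["name"]]
    result = handler(**block["input"])
    return {"type": "tool_result", "tool_use_id": block["id"], "content": result}


# A tool_use block shaped like the ones Claude returns (the id is made up).
example_block = {
    "type": "tool_use",
    "id": "toolu_example",
    "name": "get_stock_price",
    "input": {"ticker": "2330.TW"},
}
print(run_tool(example_block))
```

In a real integration, the `tool_result` block is sent back in a follow-up user message so that Claude can compose the final answer from it.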

Best practices (from Anthropic’s official recommendations):

- Keep the number of tools to 8 or fewer to allow Claude to make more accurate judgments on when to use which tool.

- Spend time writing good descriptions for tools; this is more effective than just increasing the number of tools.

- Use Sonnet for general tasks, Opus for complex reasoning, and Haiku for high-speed responses.

Claude vs ChatGPT vs Gemini: Who to choose

This is a question everyone wants to ask. Below is a comprehensive comparison based on several independent evaluations conducted in 2026. (Different needs may yield different results; this is for reference only.)

| Dimension | Claude | ChatGPT | Gemini |
|---|---|---|---|
| Complex reasoning | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Coding ability | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Natural text quality | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Response speed | ⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Context length | 200K (Opus 4.6 version 1M) | 128K | 1M |
| Safety | Constitutional AI | RLHF | Google safety framework |
| Monthly fee | $20 and up | $20 and up | $19.99 and up |

The most honest advice: each of the three tools has its strengths. A sensible approach is to switch to another model whenever the one you are using is not producing good enough results.

Who is Anthropic: A $380 billion empire built by defectors

After discussing the tools, let’s talk about the company behind Claude. Understanding Anthropic’s origins helps explain why Claude has taken a different path from other AIs.

In 2021, Dario Amodei was the VP of research at OpenAI, and his sister Daniela Amodei served as VP of safety policy. Both were at the core of the world’s most scrutinized AI laboratory, with access to the training data, the architecture designs, and, crucially, first-hand knowledge of the risks these systems carry.

But they chose to leave.

Not due to technical disagreements, but because of ideological conflicts. The Amodei siblings believed that the AI industry was overly focused on performance, neglecting safety and interpretability. While everyone was racing to build larger, faster, and stronger models, they asked a different question: What if we solve safety issues first, and then pursue capabilities?

In 2021, Dario and Daniela, along with seven core researchers from OpenAI, founded Anthropic.

Constitutional AI: Giving AI a constitution

Anthropic’s core philosophy can be summarized in one term: Constitutional AI.

In December 2022, Anthropic published a research paper proposing a novel AI training method. The traditional approach involves many human labelers telling the model “which answers are good and which are bad,” a method known as RLHF (Reinforcement Learning from Human Feedback). The problem is that human labelers have inconsistent judgment standards, and scaling this is costly.

Anthropic’s approach is different. They give the model a “constitution,” a set of principles written in natural language covering safety, ethics, compliance, and other aspects. The model then evaluates its own responses against these principles and adjusts itself based on the evaluation results.

In simple terms, it’s like no longer hiring 100 tutors to supervise one student, but instead giving the student a rulebook to determine which behaviors are correct.
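The self-evaluation step can be pictured as a critique-and-revise loop. The sketch below is a toy illustration under strong assumptions, not Anthropic’s training code: the `generate`, `critique`, and `revise` callables are hypothetical stand-ins for calls to the same language model, and in the actual method the revised answers serve as training data rather than being returned to a user.

```python
from typing import Callable, List


def constitutional_revision(
    prompt: str,
    principles: List[str],
    generate: Callable[[str], str],
    critique: Callable[[str, str], str],
    revise: Callable[[str, str], str],
) -> str:
    """Draft a response, then critique and revise it against each principle."""
    response = generate(prompt)
    for principle in principles:
        feedback = critique(response, principle)
        if feedback:  # an empty string means the principle is satisfied
            response = revise(response, feedback)
    return response


# Toy stand-ins so the loop can run without a model.
def toy_generate(prompt: str) -> str:
    return f"Draft answer to: {prompt} [unsafe detail]"


def toy_critique(response: str, principle: str) -> str:
    if principle == "Avoid unsafe details." and "[unsafe detail]" in response:
        return "Remove the unsafe detail."
    return ""


def toy_revise(response: str, feedback: str) -> str:
    return response.replace(" [unsafe detail]", "")


final = constitutional_revision(
    "How do I do X?",
    ["Avoid unsafe details.", "Be honest."],
    toy_generate,
    toy_critique,
    toy_revise,
)
print(final)  # Draft answer to: How do I do X?
```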

In January 2026, Anthropic released a new constitution for Claude. It sets a clear order of priorities for Claude’s behavior: Safety > Ethics > Compliance > Helpfulness to users. In other words, if a user asks Claude to do something unsafe, Claude will refuse.

This design philosophy has given Claude a unique position in the market: it may not be the most obedient AI, but it could be the most trustworthy AI.

Financing and numbers

From a capital standpoint, the market has clearly responded positively.

Anthropic’s financing history could serve as a venture capital textbook. In May 2023, it raised $450 million in Series C funding. In September 2023, Amazon announced an investment of up to $4 billion (later increased to $8 billion). In October 2023, Google led an investment of $2 billion. In 2024, a series of funding rounds skyrocketed its valuation. By February 2026, Anthropic completed a $30 billion Series G round led by Coatue and Singapore’s sovereign fund GIC, reaching a valuation of $380 billion.

This is the second-largest private fundraising round in history, behind only OpenAI’s round of more than $40 billion.

The list of investors reads like a power map of the tech industry: Amazon, Google, Microsoft, Nvidia, D.E. Shaw, Founders Fund, ICONIQ. Notably, Microsoft invested in both OpenAI and Anthropic: this is not a contradiction, but a hedge.

As of March 2026, Anthropic’s annual revenue has already exceeded $14 billion (some estimates even suggest it is nearing $19 billion). The number of enterprise customers spending over $100,000 annually has grown sevenfold in the past year, with over 500 clients spending more than $1 million annually.

The seven researchers who left five years ago built an empire.

Safety is not a restriction, it’s a moat

Looking back, when Dario Amodei left OpenAI with seven others in 2021, almost no one believed that “AI safety” could become a business. The mainstream narrative at that time was: speed is everything; bigger models are better; get something out first.

Five years later, Anthropic’s valuation stands at $380 billion.

Behind this number is a counterintuitive business logic: In the field of AI, safety is not a cost; it is the foundation of trust. And trust is the core reason why enterprise customers are willing to pay.

In 2022, everyone was asking what AI could do. By 2026, more people began to ask, “Can AI be trusted?”…
